Knowledge 4 All Foundation Supports the International Winter AI Olympiad 2026

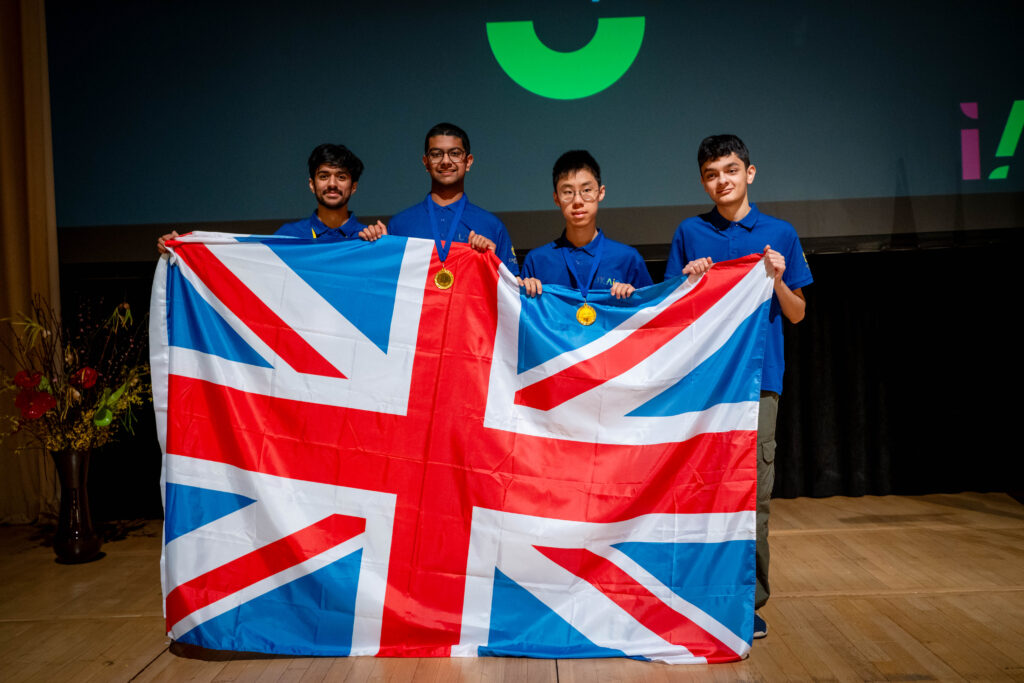

The International Winter AI Olympiad (IAIO) 2026, held from 23 to 27 February 2026 in Ljubljana, Slovenia, brought together some of the world’s most promising young artificial intelligence talents for a week of intense competition, collaboration, and learning. Organized by the International Research Centre on Artificial Intelligence (IRCAI), the event welcomed over 100 high-school students from 26 countries, all winners of their respective national competitions.

Participants competed in a series of challenging theoretical and practical AI tasks designed to test their understanding of machine learning concepts, algorithms, and real-world applications of artificial intelligence. The Olympiad combined rigorous academic problem-solving with opportunities for international exchange, allowing students to learn from peers and mentors from around the world.

The Knowledge 4 All Foundation, one of the event’s sponsors, proudly supported this initiative to nurture the next generation of AI talent. By creating opportunities for young researchers and students to test their skills on an international stage, the Foundation contributes to building a more inclusive and globally connected AI ecosystem.

Events like the International Winter AI Olympiad play a vital role in inspiring young minds to pursue careers in artificial intelligence and technology. The Knowledge 4 All Foundation remains committed to supporting educational initiatives that empower talented students worldwide and foster innovation for the benefit of society.